Test-driven development (TDD) is a process in which you write test cases (usually unit tests) for a feature before you implement it. You then make small, incremental changes to your code, rerunning the tests after each change until the new tests pass and the existing ones continue to pass. This approach is particularly useful when adding new features. See “Unit Tests, the Key to Improving Quality and Developer Efficiency” and “Unit Testing in Traditional Synergy” for more information on how to write unit tests in Synergy.

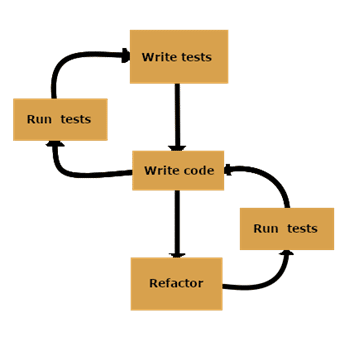

The basic steps of TDD are:

- Write a test

- Run all tests and see what fails

- Write code to make the failing test pass

- Run tests

- Refactor

- Repeat
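As a minimal illustration of one pass through these steps, here is a sketch in Python using the standard `unittest` module rather than Synergy (a substitution made for portability). The feature, a hypothetical `is_leap_year` function, is not from the original article. The tests are written first; before `is_leap_year` exists, running them fails, and the one-line implementation is the simplest code that makes all four pass.

```python
import unittest


# Step 1 (write a test): these tests describe the desired behavior and are
# written before is_leap_year exists, so running them at first fails.
class TestIsLeapYear(unittest.TestCase):
    def test_typical_leap_year(self):
        self.assertTrue(is_leap_year(2024))

    def test_typical_common_year(self):
        self.assertFalse(is_leap_year(2023))

    def test_century_is_not_leap(self):
        self.assertFalse(is_leap_year(1900))

    def test_every_fourth_century_is_leap(self):
        self.assertTrue(is_leap_year(2000))


# Step 2 (write code): the simplest implementation that makes the tests pass.
# Step 3 (refactor): the body may be rewritten freely as long as the tests stay green.
def is_leap_year(year: int) -> bool:
    """Return True if `year` is a leap year in the Gregorian calendar."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)
```

Saved as, for example, `test_leap.py` (a hypothetical file name), the tests run with `python -m unittest test_leap`.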

You start by writing a test for the desired feature and running your entire test suite (or only the new test if you are just starting out). The new test should fail, which confirms that new code is needed. Then you write the simplest code that implements the new feature and makes the test pass. Next, you rerun all tests and expect every one of them to pass. Finally, you refactor the code for readability and maintainability, rerunning the tests to confirm that nothing broke. You repeat this cycle for each new piece of functionality until the product is ready for release. The cycle looks like this:

You might be thinking: “Why should I care about this? It sounds like you just moved the testing step from the end to the beginning.” By focusing on testing first, you ensure that all new code is tested. It is easy to miss testing something if you write the tests after the implementation is done. Writing them first naturally leads to thorough tests that catch unexpected changes in behavior as new features are added. Each test you create also defines acceptance criteria, which ensures that the feature is well defined. The end goal of all this testing is to reach 100% test coverage!

Despite all this focus on testing, keep in mind that the tests themselves are only a means to an end: they express requirements that the code must meet. As such, tests should be small and incremental, and commits should be frequent. That way, if a test fails, you can simply revert the last commit instead of spending a lot of time debugging. Because you write only as much code as is needed to make the failing tests pass, errors are much easier to track down than they would be if you wrote the tests after finishing the implementation. This reduces time spent debugging and eases the effort of coding new features. You have already done the planning by setting up your tests as the acceptance criteria!

TDD in Action

Let’s start with a simple example of how to work with TDD. Say you want to add a login page to a website. You know that you will need text boxes for the username and password, and an “OK” button the user clicks to attempt to log in. Following the TDD steps, you first write unit tests for these new pieces to check that the login page works. Next, you run your entire test suite, and the new tests fail because nothing has been implemented yet. You then add code to make these tests pass: the basic implementation of your button and text boxes. After this, you rerun the tests to check that they pass. Unfortunately, your basic implementation caused one of your homepage tests to fail. So you go back and revise your code so that it no longer causes the new failure, and then run your tests again. You repeat this until you end up with a working login page, with greater confidence that your changes have not caused errors elsewhere.
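The login-page tests might look something like the following sketch. It is written in Python with `unittest` and models the page as a plain object rather than real UI controls; `LoginForm`, its `username` and `password` fields, and `press_ok()` are hypothetical names chosen for illustration, and the in-memory account table is an assumption that keeps the tests self-contained. In TDD order the test class comes first, and `LoginForm` is the basic implementation added afterward to make those tests pass.

```python
import unittest
from dataclasses import dataclass, field


# Written first: these tests describe the login page before it exists.
class TestLoginForm(unittest.TestCase):
    def setUp(self):
        # Hypothetical account table so the tests need no real backend.
        self.form = LoginForm(accounts={"alice": "s3cret"})

    def test_text_boxes_start_empty(self):
        self.assertEqual(self.form.username, "")
        self.assertEqual(self.form.password, "")

    def test_ok_with_valid_credentials_logs_in(self):
        self.form.username, self.form.password = "alice", "s3cret"
        self.assertTrue(self.form.press_ok())

    def test_ok_with_wrong_password_is_rejected(self):
        self.form.username, self.form.password = "alice", "wrong"
        self.assertFalse(self.form.press_ok())

    def test_ok_with_empty_username_is_rejected(self):
        self.form.password = "s3cret"
        self.assertFalse(self.form.press_ok())


# Added afterward: the basic implementation that makes the tests above pass.
@dataclass
class LoginForm:
    # username -> password; plain text here for brevity only.
    accounts: dict = field(default_factory=dict)
    username: str = ""  # username text box
    password: str = ""  # password text box

    def press_ok(self) -> bool:
        """Simulate clicking OK: True only if both boxes match a known account."""
        if not self.username or not self.password:
            return False
        return self.accounts.get(self.username) == self.password
```

If the `LoginForm` class is removed, every test errors out with a `NameError`, which corresponds to the step where the new tests fail because nothing has been implemented yet.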

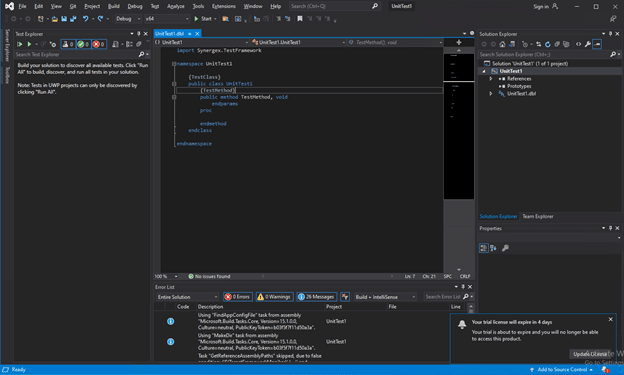

Synergex has used TDD on several recently added features. The one I’m going to talk about is the unit test framework for traditional Synergy. We started by writing the tests for the new feature. Because the final design was already defined by Visual Studio, we knew exactly what the end product would look like, so writing these tests was much easier than usual.

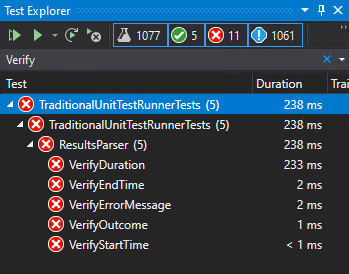

As expected, our tests failed when we ran them, so we set about writing new code to make them pass. This step is ordinary development time, spent writing the minimal amount of code needed to implement the feature and make the tests pass.

After writing our code, we ran the tests again to verify that they passed, working through the failing tests one by one until all of them passed. At that point we moved on to the refactoring step: we trimmed duplicate code, moved code around, and rewrote hard-coded parts. As we refactored, we ran the tests repeatedly so that we could revert any change that caused a test to start failing again. This process gave us a high degree of confidence in the quality of what we were releasing.
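The refactoring step can be illustrated with a small, hypothetical Python example (it is not Synergex’s code). The commented-out version is what might exist right after the tests first pass: two near-duplicate functions with a hard-coded prefix and padding width. The refactored version moves the shared logic into one parameterized `document_header` function (an illustrative name), and the unchanged tests confirm that the behavior is the same.

```python
import unittest

# Before refactoring (tests pass, but the code is repetitive and hard-coded):
#
#     def invoice_header(number):
#         return "INVOICE #" + str(number).zfill(6)
#
#     def receipt_header(number):
#         return "RECEIPT #" + str(number).zfill(6)


# After refactoring: the duplicated logic lives in one place, and the
# document kind and padding width are parameters instead of literals.
def document_header(kind: str, number: int, width: int = 6) -> str:
    return f"{kind.upper()} #{number:0{width}d}"


def invoice_header(number: int) -> str:
    return document_header("invoice", number)


def receipt_header(number: int) -> str:
    return document_header("receipt", number)


# These tests existed before the refactor and were not changed; rerunning
# them after each edit shows whether a change can be kept or must be reverted.
class TestHeaders(unittest.TestCase):
    def test_invoice_header_is_zero_padded(self):
        self.assertEqual(invoice_header(42), "INVOICE #000042")

    def test_receipt_header_is_zero_padded(self):
        self.assertEqual(receipt_header(7), "RECEIPT #000007")
```

Because the tests only check observable output, they pass against both the old and the new version, which is what makes it safe to restructure the code underneath them.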

Since adopting test-driven development practices at Synergex, we’ve been able to catch bugs much earlier in the development cycle on several projects. As we continue to practice good TDD habits, we have gained greater confidence in each of our releases. I hope this article has shown that test-driven development is a valuable asset, and I believe this practice can greatly improve any team’s workflow.